One angle of attack on Clayton Christensen’s disruption theory is that it’s often stretched beyond its range of applicability, to the point of becoming unfalsifiable. For example, Benedict Evans tweeted: “‘Disruption’ Christensen reminds me a little of Marxist historians. Over-enamoured of the One True Theory, tempted to make the facts fit it.” And more recently: “Popper’s notes on predictive theories in social science (always seemed to me to fit Disruption very well).” Then he quotes from Karl Popper’s famous paper Science as Falsification:

The Marxist theory of history, in spite of the serious efforts of some of its founders and followers, ultimately adopted this soothsaying practice. In some of its earlier formulations (for example in Marx’s analysis of the character of the “coming social revolution”) their predictions were testable, and in fact falsified.[2] Yet instead of accepting the refutations the followers of Marx re-interpreted both the theory and the evidence in order to make them agree. In this way they rescued the theory from refutation; but they did so at the price of adopting a device which made it irrefutable. They thus gave a “conventionalist twist” to the theory; and by this stratagem they destroyed its much advertised claim to scientific status.

Now to be fair, Evans has also said Disruption theory can be a very useful lens for understanding technology. So my take is he’s just against going overboard with it. I really like this line of reasoning. But when I tried to apply a Popperian frame to Disruption theory, and tossed in some Charles Darwin along the way, I wound up concluding that Disruption theory’s ultimate and ironic measure of success would be falsifying itself. This conclusion seems a bit odd, so let’s take it one step at a time.

First, let’s go over a quick reminder of Clayton Christensen’s views on innovation. I’ll use his definitions from this Capitalist’s Dilemma interview, where Christensen breaks innovation into three types:

- Empowering or Disruptive Innovation (camera phones over film cameras, PCs over minicomputers over mainframe computers)

- Sustaining Innovation (hybrid cars like the Prius over conventional cars)

- Efficiency Innovation (logistical cost-saving innovations for cheaper manufacturing of existing products)

We are of course concerned with the first type: disruptive innovation. Now from Horace Dediu’s excellent Disruption FAQ, we see that disruptive innovations can be broken into “new market disruption” (this is the classic version, such as camera phones and PCs above) and “low-end disruption” (steel mini-mills). And with that terse reminder, I’d suggest you pause to read the quite good Wikipedia page on disruptive innovation if needed. Or go to Horace Dediu’s FAQ for more depth.

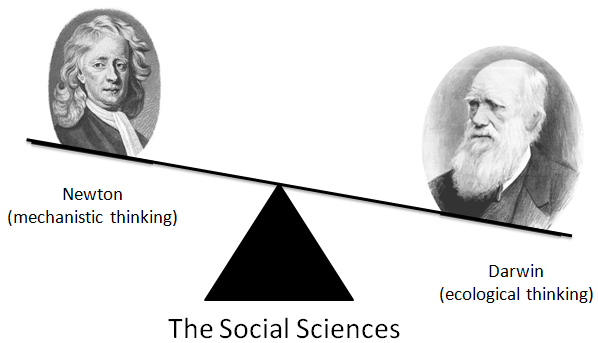

Back to Popper. Popper criticized Marxism as a pseudoscience, one that naively imitated the methods of the hard sciences. Marx tried to create a Newtonian physics for society, where hidden laws of class conflict mechanistically drive society down a determined and predictable path. Marx was wrong of course. Mechanistic Newtonian thinking doesn’t work for social science. Even today you’ll hear people talk about “social forces”. Sometimes that Newtonian wording is convenient. But really. Forces are for particles, not people. When we talk about people we are talking about massively complex groups interacting in ways that evolve and change over time. That is, we’re talking in the style of Charles Darwin. A far better model. For the social sciences, Darwin’s style of ecological thinking trumps Newton’s mechanistic thinking.

Of course Karl Popper famously changed his mind on whether the theory of natural selection was scientific. From Popper’s 1977 Darwin Lecture:

I have in the past described the theory as “almost tautological”, and I have tried to explain how the theory of natural selection could be untestable (as is a tautology) and yet of great scientific interest. My solution was that the doctrine of natural selection is a most successful metaphysical research program. It raises detailed problems in many fields, and it tells us what we would expect of an acceptable solution of these problems. I still believe that natural selection works in this way as a research program. Nevertheless, I have changed my mind about the testability and the logical status of the theory of natural selection; and I am glad to have an opportunity to make a recantation. My recantation may, I hope, contribute a little to the understanding of the status of natural selection.

And:

The theory of natural selection may be so formulated that it is far from tautological. In this case it is not only testable, but it turns out to be not strictly universally true. There seem to be exceptions, as with so many biological theories; and considering the random character of the variations on which natural selection operates, the occurrence of exceptions is not surprising. Thus not all phenomena of evolution are explained by natural selection alone. Yet in every particular case it is a challenging research program to show how far natural selection can possibly be held responsible for the evolution of a particular organ or behavioral program.

Now we have to unpack the language in this second paragraph. Popper is using “natural selection” in a way most biologists today would not. He means a pure form of natural selection, where only mutations that provide a very direct, immediate benefit to an organism get selected for and passed on. This would exclude sexual selection (the peacock’s tail) and genetic drift, neither of which provides immediate direct utility, though both can drive evolution. With that aside, the larger point is that Popper considers evolution by natural selection a science, but one that deals with such massive contingent complexity that it has exceptions. So its predictive powers are imperfect. Sounds a lot like Disruption theory.

Reading Clayton Christensen’s discussion of how he builds and tests models of disruption theory (via Horace Dediu), I see he follows a methodology Popper would approve of. He tries to falsify. He tests his theories by looking for anomalies. As university scholarship, this approach is perfect. Keep it up. But recall that Popper teaches us the social sciences will have exceptions. So I was a bit surprised by this article saying “Disruption Theory blindly predicted if new businesses would survive or fail with 94 percent accuracy and over 99 percent statistical confidence. Holy crap.” My first reaction was to wonder whether disruption theory should be simplified to make it less predictive. Why? Let me quote from a favorite essay called “How I Work” by another influential social scientist, economist Paul Krugman:

[A]lways try to express your ideas in the simplest possible model. The act of stripping down to this minimalist model will force you to get to the essence of what you are trying to say (and will also make obvious to you those situations in which you actually have nothing to say). And this minimalist model will then be easy to explain to other economists as well.

I have used the “minimum necessary model” approach over and over again: using a one-factor, one-industry model to explain the basic role of monopolistic competition in trade; assuming sector-specific labor rather than full Heckscher-Ohlin factor substitution to explain the effects of intraindustry [sic] trade; working with symmetric countries to assess the role of reciprocal dumping; and so on. In each case the effect has been to allow me to tackle a subject widely viewed as formidably difficult with what appears, at first sight, to be ridiculous simplicity.

The downside of this strategy is, of course, that many of your colleagues will tend to assume that an insight that can be expressed in a cute little model must be trivial and obvious — it takes some sophistication to realize that simplicity may be the result of years of hard thinking.

The true brilliance of Clayton Christensen is precisely this. Years of hard thinking to produce an elegant and straightforward model. His influence on the business world is richly deserved. What’s less clear is how much farther disruption theory should be taken down the path of complexity. For serious scholarship, as far as possible, I suppose. But every step towards complexity increases the risk of creating theoretical epicycles: ad hoc justifications that weaken the power of the simpler theory for small gains in predictive power.

In fact it’s worth asking how the predictability of disruption helps us in the first place. The most recent episode of Ben Thompson and James Allworth’s excellent Exponent podcast asks “is society better off when corporations try to innovate, or would we be better off if we left the innovation to startups?” That is, if disruption is predictably inevitable, would society be better off if large corporations stopped wasting money trying to fight it? One way to think about this is that every new startup is an experiment. And the market economy itself is an ongoing experiment that uses price information to coordinate and share knowledge. (Yes, since we’re doing Popper, why not Hayek as well.) The twist here is that because people are reading Christensen’s disruption books, his social science has become a meta-experiment. The people involved are now aware of disruption and change their behavior accordingly. The new question: can disruption theory provide enough knowledge so that its own predictions become false? Not yet perhaps. But this is a longstanding line within Christensen’s own research. So eventually, why not? If that’s the case, then the answer is yes, companies should try to avoid disruption if they think they can do it. Many will fail (Borders). But some will succeed (Adobe Creative Cloud). We should think of companies that try but fail to avoid disruption in the same way we think about failed startups. Honorable attempts adding to our pool of knowledge. Costly to the firms themselves, but beneficial to society. Especially since the productivity upsides from successful experiments dominate the losses from failures.

In fact Christensen, like everyone, has blind spots. For example, Ben Thompson (among others) has noted how Apple and the high-end consumer market appear to be exceptions to Christensen’s models. So it’s quite possible the organizational solution to avoiding disruption will come from a company building on Christensen’s insights rather than one following the letter of his ideas. And that’s fine.

Let’s formalize this. Here is Steve Denning talking about ways to avoid disruption:

Here we have the key to solving the puzzle of disruption—a key that doesn’t appear in Lepore’s article. A different kind of management is needed to deal with disruption. The “good management” that leads to bad results is obsolete management premised on what even Jack Welch has called “the world’s dumbest idea”: maximizing shareholder value. It’s the pursuit of this idea that leads to the shedding of items from the balance sheet even if they are essential for innovation and all the other management practices that lead to disruption.

Thus disruption is a symptom, not the underlying disease. This is why the theory of disruption by itself offers no cure. To get to a cure, we have to follow Roger Martin’s thinking to get to the root cause of the problem: discarding the goal of shareholder primacy and replacing it with the goal of delighting customers.

And to succeed with that goal, corporations need to be implementing all other management principles required to delight customers—a shift from controlling individuals to enabling teams, networks, and ecosystems; a shift from bureaucracy to agility and dynamic linking; a shift in values from the primacy of efficiency to the primacy of continuous improvement and transparency; and a shift from one-way top-down communications to multi-directional conversation. This is Management 101 for the 21st Century.

In effect, welcome to the Creative Economy! More than a score of books have been written about it. Some large corporations, and very many small and medium size corporations, are already practicing these very different principles of management.

Now of course, for a CEO, “discarding the goal of shareholder primacy” sounds like a great way to get fired. But let’s keep our experiment hat on for just a bit longer. Some companies will try this out. Some will not. So what might cause creative or mission-driven companies to become more successful, along the lines of Apple? Well, one way that could happen is if disruption becomes far more common than it’s historically been.

John Hagel argues exactly that. While globalization has been a big factor in increasing disruption since the end of the Cold War, Hagel argues Moore’s law is starting to play a larger role. Quote:

One of the metrics in our Shift Index looks at what economists call topple rate – the rate at which leaders fall out of their leadership position. In this case, we focused on the rate at which public US companies in the top quartile of return on assets performance fall out of this leadership position. Between 1965 and 2012, the topple rate increased by 40%.

In 1937, at the height of the Great Depression and certainly a time of great turmoil, a company on the S&P 500 had an average lifespan of 75 years. By 2011, that lifespan had dropped to 18 years – a decline in lifespan of almost 75%.

And:

Digital technology is different – in fact, it’s unprecedented in human history. It’s the first technology that has demonstrated sustained exponential improvement in price/performance over an extended period of time and continuing into the foreseeable future (based on interviews with scientists and technologists pushing the boundaries of this technology).

So, there’s no stabilization in the core technology components of computing, storage and bandwidth. As a result, there’s no stabilization in infrastructure – cloud computing is simply the most recent manifestation of this infrastructure and it certainly won’t be the last. And therefore there’s no stabilization in terms of how companies can use this technology to create and capture value.

But there’s more. This exponentially improving digital technology is spilling over into adjacent technologies, catalyzing similar waves of disruption in diverse arenas like 3D printing of physical objects, biosynthesis of living tissue, robotics and automobiles, just to name a few. The advent of exponentially improving technologies in an expanding array of markets and industries only increases the potential for disruption. We’ve explored this expansion of exponential technologies in a working paper here.

Saying a technology is “unprecedented in human history” seems over the top. What about fire and the invention of farming? But to be honest I think this is exactly right, especially if we restrict ourselves to technologies invented since the industrial revolution. It’s hard to overstate how extraordinary Moore’s law’s 50-year run of exponential improvement has been. It’s scary and exhilarating at the same time. For example, see my post noting that as software eats the world, we move from an age of oligopolies to an age of natural monopolies.

Another factor to take into account is that in poor societies, job satisfaction is less important than paying for necessities like food and shelter. But as a society gets richer, people can more readily afford to be choosy about where they work. This is a real rich-world problem. As the competition for people becomes more fierce, mission-driven organizations have an economic edge in attracting top talent. So an indirect effect of wealth may be fewer companies focused on squeezing their next quarter’s numbers and more focused on their mission. In an increasingly disruptive age, we may ironically find disruption theory’s ability to predict the future has greatly diminished.

Very interesting! Maybe you could also say that if there’s a way to predict the future, companies will use it and make the future unpredictable again. Isn’t this exactly what makes stock prices unpredictable?

I’m reminded of Alan Kay’s maxim that the best way to predict the future is to invent it. Companies like Apple that are inventing the future are in the best position to predict the future. Since they understand the concept of disruption and can try to avoid it, that by itself robs the rest of the world of much predictive power from using the same concept. With poorer information about what is possible and how markets are changing, how can analysts compete with insiders in predicting when the bases of competition have changed?

You mention Moore’s law as an engine of constant change. That reminds me of Richard Feynman’s maxim that a big enough quantitative change constitutes a qualitative change. Tech is really a new industry every few years! This makes it difficult to make predictions.

Finally, I agree that evolution is a good model for innovation and economic competition. Disruption theory is basically an attempt to predict the outcome of evolutionary experiments, which is not generally possible. Like disruption theory, the evolutionary principle is wonderful for understanding why biological innovations were effective historically, but doesn’t help much in figuring out which innovations are coming next, how they will interact, and when/how to change your own behavior to adapt.