The past few decades have seen a lot of excellent research into why partisan cultural battles never seem to reach agreement. Of course David Hume was on to this centuries ago, famously claiming that “Reason is, and ought only to be the slave of the passions, and can never pretend to any other office than to serve and obey them.” What’s great is that this newer research scientifically investigates exactly how and why reason is so often the slave to the passions. Dan Kahan (pictured above) is doing research into cultural cognition. He posits that cultural values drive perceptions of risk. This makes information that conflicts with those values appear risky, so it’s easily dismissed.

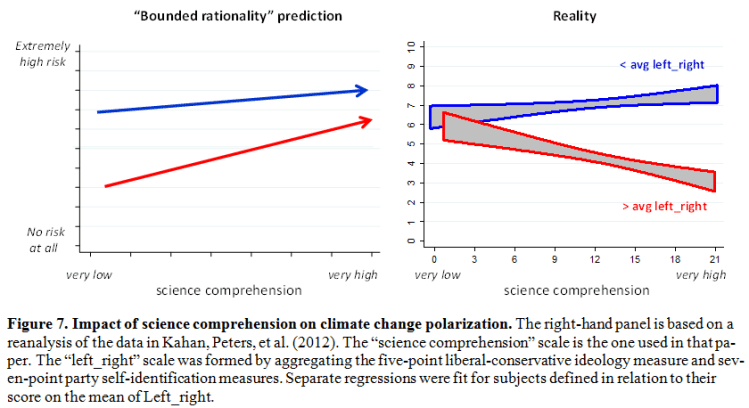

Kahan’s work on climate change is especially good. As Kahan explains, one simplistic theory attributes climate change denial to a lack of scientific literacy: if more and better facts are presented, people will change their minds. Of course that doesn’t work. Presenting facts that contradict existing beliefs on ideologically charged issues tends, ironically, to strengthen those beliefs. A more sophisticated version of this argument is the “bounded rationality thesis” or BRT, where people deny climate change because they use heuristic shortcuts instead of painstaking logical reasoning. Kahan writes in a rather terse style, but it’s worth quoting him on BRT directly:

BRT is very plausible, because it reflects a genuine and genuinely important body of work on the role that overreliance on heuristic (or “System 1”) reasoning as opposed to conscious, analytic (“System 2”) reasoning plays in all manner of cognitive bias (Frederick 2005; Kahneman 2003). But many more surmises about how the world works are plausible than are true (Watts 2011). That is why it makes sense for science communication researchers, when they are offering advice to science communicators, to clearly identify accounts like BRT as “conjectures” in need of empirical testing rather than as tested “explanations.”

BRT generates a straightforward hypothesis about perception of climate change risks. If the reason ordinary citizens are less concerned about climate change than they should be is that they over-rely on heuristic, System 1 forms of reasoning, then one would expect climate concern to be higher among the individuals most able and disposed to use analytical, System 2 forms of reasoning. In addition, because these conscious, effortful forms of analytical reasoning are posited to “counteract the emotionally comforting desire for confirmation of one’s beliefs” (Weber & Stern 2011), one would also predict that polarization ought to dissipate among culturally diverse individuals whose proficiency in System 2 reasoning is comparably high.

Let me unpack this. If heuristic shortcuts are why people deny climate change, then people with strong reasoning skills and science comprehension should be less likely to be deniers. So the prediction would be the chart on the left below. Both liberals in blue and conservatives in red would see more risk from climate change as their science comprehension gets better. The reality, shown in the chart on the right, is quite different. Conservatives who understand science better see less risk from climate change.

Kahan explains this by noting:

But because positions on climate change have become such a readily identifiable indicator of one’s cultural commitments, adopting a stance toward climate change that deviates from the one that prevails among her closest associates could have devastating consequences, psychic and material. Thus, it is perfectly rational, perfectly in line with using information appropriately to achieve an important personal end, for that individual to attend to information in a manner that more reliably connects her beliefs about climate change to the ones that predominate among her peers than to the best available scientific evidence (Kahan, 2012).

That is to say, it’s rational to use your powers of reason to help you fit in with your peers, since that’s more important than being correct in the abstract. This is of course a humbling insight. Social norms trump reason. Fortunately Kahan notes this problem only affects narrow areas of life, where cultural identity issues are most contentious. So things like climate, nuclear power, abortion, contraceptives (Hobby Lobby). Less so for everyday topics. Except sports of course. And Apple. I know you’re now thinking this doesn’t apply to you. I did at first. Not any more. But let’s say you think this only applies to Conservatives. Or dumb non-science people (despite the evidence that smarter science types rationalize better). For example, suppose you are a brilliant Nobel Prize-winning economist. How would you react? Let’s find out.

Ezra Klein at Vox ran a great profile on Dan Kahan’s work (recommended). Below is Paul Krugman’s response, titled appropriately enough “Asymmetric Stupidity”:

What Ezra does is cite research showing that people understand the world in ways that suit their tribal identities: in controlled experiments both conservatives and liberals systematically misread facts in a way that confirms their biases. And more information doesn’t help: people screen out or discount facts that don’t fit their worldview. Politics, as he says, makes us stupid.

But here’s the thing: the lived experience is that this effect is not, in fact, symmetric between liberals and conservatives.

And:

At this point I could castigate Ezra for his both-sides-do-it article — but instead, let me pose this as a question: why are the two sides so asymmetric? People want to believe what suits their preconceptions, so why the big difference between left and right on the extent to which this desire trumps facts?

One possible answer would be that liberals and conservatives are very different kinds of people — that liberalism goes along with a skeptical, doubting — even self-doubting — frame of mind; “a liberal is someone who won’t take his own side in an argument.”

Another possible answer is that it’s institutional, that liberals don’t have the same kind of monolithic, oligarch-financed network of media organizations and think tanks as the right.

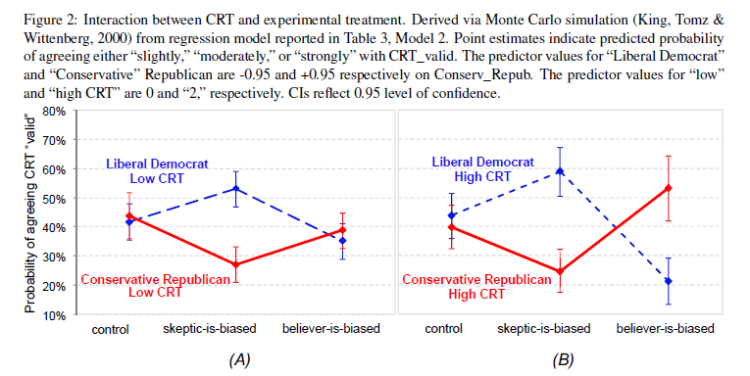

Whatever it is, I think it’s important: people are people, but politics doesn’t seem to have the same stupiditizing effect on left and right.

I’ll happily concede that in recent decades conservatives have had more ideological conflict with science than liberals. But the formal structure of Krugman’s argument has an almost Onion-like level of parody perfection. He rejects Kahan’s framing because... it might equally well apply to his own tribe. And since his tribe is correct, he must reluctantly conclude his own tribe consists of a better class of people. Kahan’s response was priceless. He wrote a very short post titled “Finally: decisive, knock-down, irrefutable proof of the ideological symmetry of motivated reasoning.” All his post has is one line: “Sometimes something so amazingly funny happens that you have to pinch yourself to make sure you aren’t really just a cellular automaton in a computer-simulated comedy world.” Then he has a screenshot of Krugman’s post above. Then he shows a chart from one of his recent papers testing Krugman’s exact hypothesis on the asymmetry of beliefs. See the chart below. As I mentioned, Kahan can be a bit terse. I had to read the paper to get it, but of course what the chart shows is the opposite of what Krugman contends. Not asymmetry but ideological symmetry. Even worse, it shows that those with better reasoning skills are more, not less, biased. Which of course Krugman, precisely because he is so very brilliant, is able to reason away. He proudly cites his “lived experience,” which one must assume consists mostly of people who share his own views. As it does for us all.

_______________________________________________

More Reading:

- Ezra Klein’s profile and interview on Vox mentioned above is really good. Start there.

- Dan Kahan’s blog.

- Dan Kahan on Twitter.

- A good post by Robin Hanson on being choosy about where to apply your limited stock of rationality.

- Given how intractable arguments are involving identity politics, sports, emacs versus vi, iPhone versus Android, and other tribal subjects, I’ve always been fascinated by the subject. It seems to me there is a shallow and a deep reading of this research. The shallow reading says great, now I know the common reasoning flaws so I’ll just avoid them. My biases are gone. I’m cured. The shallow reading leads to hubris. The deep reading is to understand these flaws are baked into human nature itself. If it can happen to a Nobel Prize winner, it can happen to me. The deep reading leads to humility. And to be fair to Krugman, as mentioned, on many policy issues at stake today Conservatives do have more ideological conflict with science and hence more gaps. But this does not mean the cultural cognition problem applies only to people you disagree with. It’s universal.

- Some of my posts in a similar vein:

- Understanding Republican Science Denialism. Haidt is right. Mooney is wrong. – My post on Jonathan Haidt’s book The Righteous Mind. Haidt strikes me as very similar to Kahan. Haidt argues that all groups hold sacred beliefs, and when the sacred conflicts with truth, the sacred wins. Big fan of his book.

- Living with a deep faith in science – Another Haidt-influenced post, this time on science belief.

- Demonizing along your preferred axis – Review of Arnold Kling’s ebook essay The Three Languages of Politics. A great framework for understanding other political tribes besides your own.

It seems to me that the asymmetry is that, as is often said, facts have a liberal bias. If the tribal identity on one side requires science rejection, then they are likely to be often wrong about the facts. It also seems to me that tribal identity is stronger on the conservative side. As Krugman puts it in your quote, “a liberal is someone who won’t take his own side in an argument.” I would expect the distortions caused by a stronger tribal identity to be stronger.

I sometimes wonder if the politicization of global warming was inevitable. Maybe if the first prominent public figure pushing on this issue had been a famous conservative, rather than Al Gore, Republicans wouldn’t have been forced to treat this as a tribal matter. But maybe it was inevitable that one of the major parties would be captured by the CO2-producing lobby, and the Republicans were the better fit.

http://krugman.blogs.nytimes.com/2014/08/23/attack-of-the-crazy-centrists/

If Kahan is right (and the evidence seems to be that he is) then I suspect our efforts to pass CF&D (or any other effective climate legislation) are doomed. For the sake of my grandchildren, I suppose I can only hope that my own tribal bias has led me to falsely trust the science that predicts bad outcomes if we continue our present fossil fuel dependency.

Not very comforting, I must say.