Last year I argued voice interaction would become the “God particle” of mobile. And in 2012 I argued that voice was a natural overlay to the phone/tablet touch interface, much as the graphical interface was a natural overlay to the command line. So I’m a true believer. It’s worth taking a fresh look at voice interaction as wearables (fitness bands, smart watches, health monitor pendants, clip-ons) come into the picture. How will voice interaction work with wearables? Who wins? Who loses?

First let’s distinguish between voice interaction as a technology versus general artificial intelligence. I think General AI will happen, but at some unpredictable time from the present. Minimally decades. Possibly far longer. Whereas the technology of voice interaction (Natural Language Processing) is already in widespread use with Apple Siri and Google Now.

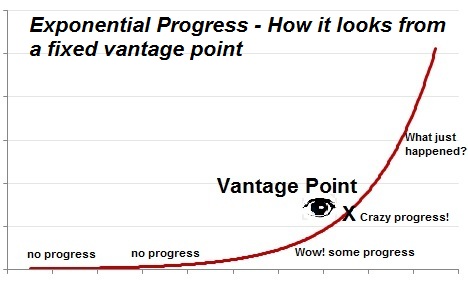

And once software gets into wide use, it often has an exponential improvement trajectory, driven by algorithmic breakthroughs coupled with Moore’s law. Especially during its initial growth phase. I explored this in detail in last week’s post, using computer chess as an example. But note that during the early portion of exponential growth, doubling doesn’t seem to do anything at all. Twice almost nothing is still almost nothing. Then as the technology approaches our reference vantage point (in this case human spoken language), doubling becomes magical.
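To make the “twice almost nothing is still almost nothing” point concrete, here is a minimal sketch in Python. The starting level (0.1% of human-level ability) and the steady one-doubling-per-period pace are purely illustrative assumptions, not measurements of any real speech system.

```python
def doubling_trajectory(start=0.001, target=1.0):
    """Return the capability level after each doubling until it reaches target.

    start and target are hypothetical fractions of "human-level" ability.
    """
    levels = [start]
    while levels[-1] < target:
        levels.append(levels[-1] * 2)
    return levels


levels = doubling_trajectory()
for period, level in enumerate(levels):
    print(f"doubling {period:2d}: {level:8.1%} of human level")
```

Under these assumptions it takes ten doublings to cross the line, and after seven of them the level is still under 13%. The last three doublings do almost all of the visible work.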

So why aren’t more people freaking out? Expecting voice interaction with computers to become completely mainstream in, say, 2-4 years? Some of this is just lack of imagination. But a more sophisticated critique is that while improvements may be exponential, so might be the grading scale. For example, perceived sound loudness fits a log scale. When you crank the volume from 10 to 11, you perceive a linear change. But the underlying power goes up by a multiple, not a fixed increment. Perhaps natural language processing is like that. Doubling only gives a linear perception of improvement. In that case, we’ll never get an exponential liftoff. All I can give is my impression, which is that the year over year improvements we’re seeing in Google Now and Apple Siri are accelerating, and dwarf what we saw in something like Dragon NaturallySpeaking a decade ago. Or perhaps people aren’t paying attention because they’re easily bored. Longing for the new new thing just as the old new thing gets interesting. And starts having an economic impact. After all, the original iPhone only sold a million units a quarter, while the newer ones sell 50x that. So they’re boring.
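The log-scale critique can be sketched the same way. This is a toy model, assuming perception is simply the base-2 logarithm of raw capability. The function name and the scale are hypothetical, chosen only to illustrate the argument.

```python
import math


def perceived_level(raw_capability, reference=1.0):
    """Map raw capability to a perceived score on a log2 scale (toy model)."""
    return math.log2(raw_capability / reference)


# Raw capability doubles at every step: 1, 2, 4, 8, 16, 32.
raw = [2 ** n for n in range(6)]
perceived = [perceived_level(r) for r in raw]

# Difference in perceived score between consecutive steps.
steps = [b - a for a, b in zip(perceived, perceived[1:])]

for r, p in zip(raw, perceived):
    print(f"raw {r:2d} -> perceived {p:.1f}")
```

In this model every doubling adds exactly one point to the perceived score, so exponential progress underneath looks like a steady, unremarkable climb to the listener.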

What does this mean for wearables? First I’ll confess my bias. I recently got a free Fitbit and never took it out of the box. I prefer tracking my running with a phone app or dedicated GPS watch. Long term of course there’s more to wearables. After all, Tim Cook has a FuelBand. But short term, the skeptical takes from Ben Bajarin, Craig Hockenberry and John Gruber ring true to me. Let’s walk through some examples.

Google Glass. When Robert Scoble noted that Larry Page wasn’t wearing Google Glass recently at TED, John Gruber’s response was pretty funny: “Scoble, Scoble, Scoble. Glass is so 2012. It’s all about Android Wear watches in 2014.” Enough said.

Oculus Rift. Ok, I picked a snarky image. More seriously, like everyone else I’m convinced Oculus Rift is totally awesome and has a big future. But wearable? No. Fans distinguish between Virtual Reality (VR), sitting down and immersing in a synthetic environment, and Augmented Reality (AR), putting a visual overlay on what’s in front of you while you go about your day. Oculus Rift is VR. Not a wearable. Google Glass is AR. Wearable. These two overlap at a technology level, so the technology of Oculus Rift will transform AR as well. Long term I’m on board with Halting State Goggles. But from a usage and market point of view VR and AR are distinct. And right now VR is so much farther along.

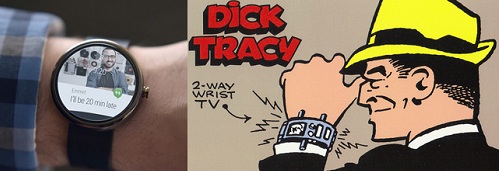

Android Wear. Google’s March 18 Android Wear announcement reminded me of a Dick Tracy watch. And not in a good way. The watch images in the video ad were simulated, and having a screen on your wrist seems like a battery nightmare. The idea is interesting. But I like Jan Dawson’s point that Google’s approach treats wearables as an outward secondary phone display. Whereas I see the phone as the digital hub, in Ben Thompson’s phrase. Wearables are sensors that should feed inward to the hub.

Nike FuelBand and Misfit Shine. Now we’re getting somewhere. Wearables as activity trackers. Feeding your digital hub phone with steps walked, calories burned, and eventually heart rate, glucose levels and other health monitoring. All fine. My problem here is the latest phones have motion chips in them already, so can do basic activity tracking just sitting there in your pocket. The more advanced health sensors coming down the road are great, but leave this generation of wearables as niche products.

Let’s take a step back and ask what problem we’re trying to solve. Isn’t the real use case all about voice interaction? That was certainly the most compelling part of the Android Wear video demo. Normally people think vision when they talk about Virtual Reality and Augmented Reality. But what about augmented reality for audio? Call it Augmented Audio Reality. AAR.

The wearable technology for AAR of course already exists. It’s called a Bluetooth headset. For example the Jawbone Era below.

What distinguishes Augmented Audio Reality from traditional AR like Google Glass is that human beings are truly capable of multitasking audio. People talk over one another all the time. And listening to the radio while driving a car, or a podcast while working around the house is no big deal. And far less rude. And by getting the audio only in your own ears, you don’t annoy those around you. This is the kind of thing you could wear all day every day. As MG Siegler discusses here. Batteries of course are an issue, but at least you don’t have to drive a retina display.

Nothing fundamentally new needs to be invented. Just refinement of what we already have. Better headsets. Better voice interaction (Apple Siri and Google Now). In fact we should look to hearing aids for wearable design ideas. See below. Low key, nearly invisible, not distracting. Opposite of Google Glass. In fact in many ways better than staring continuously down at your phone, which is what people do today (recall the image at the top of this post).

Let’s speculate how this would work. Assume we have wearable Bluetooth earbuds on all the time. Couple this with greatly improved Voice Interaction to put yourself into Augmented Audio Reality (AAR).

Life in an AAR world:

- Replace many touch interactions on your phone with voice. Let me give an example. My six-year-old likes to play games on the iPad, so a year ago I showed him how to ask Siri questions. The answers were pretty bad and he lost interest. When I showed him more recently with the improved Siri, he loved it. Asking math questions to stump the iPad. Asking for jokes. The delight was clear. Voice interaction is our primal way to communicate, with a million years of evolution behind it. It’s the default. If people can do it, they will choose to do it.

- Audio “cards” or cues. This is the key to augmented audio reality. Today Google Now has visual cards that automatically show information on your phone tied to your current location and activity. And notifications. What happens when your phone allows you to disable/enable audio cards on topics of interest? No need to pull out your phone and rudely stare down. Or pretend to be Dick Tracy. Just quietly ask for and get the information you want. Menu recommendations, shopping advice, recalling someone’s name, suggestions on where to go and what to do, catching a cab, weather reports, sports scores, etc. And the privacy issues with surreptitiously recording audio already exist with today’s phones, so while important it’s not a change. Different from video.

- Shopping – Once computer voice interaction really works, people will go from question to purchase just by talking with their phone. Whoever owns that channel owns both the advertising and the product selection. Serious money.

- Travel – instant translation in your earbuds. Step off the plane in a foreign country. Talk to a random person in your native language (assuming they also have their earbuds in). They automatically get a translation in their earbuds. They talk back. You hear a translation in your earbuds. No set up at all. Just works. An augmented audio reality where you can understand all languages on the planet. Awesome. And wonderful for anyone anywhere working a customer service desk.

- Active noise cancellation. A 2.0 version could actively mute not just annoying sounds, but with powerful enough signal processing even particular people. Not too far fetched down the road. Everyone knows someone or something they wish had a mute button. Want to mute the screaming baby next to you on plane? Now you can.

- Tourism – museums, guided tours, anything where location is known and you want information about the spot you are in.

- Social – If you think social apps like LINE and WeChat have a lock now, wait until always on earbuds are common and you can jump from text chat to voice frictionlessly.

- Sports – group chat and trash talking during the big game. Use social to join a group session.

- Music identification – services like Shazam are great at identifying music. But pulling out your phone and launching the app is often not worth the trouble. With always on Bluetooth headsets in place, this becomes frictionless. Just ask the ether for a song title and any details you want, and get the answer whispered in your ear.

- Push to talk 2.0. Current push to talk is annoying because everyone hears. If the sound goes to your own ears alone, it’s a lot more seamless and useful. Kids will use it to flirt and gossip.

- Hearing disability – my hearing is a little weak. Partly age, partly very loud bands from my misspent youth. Perhaps that’s why the idea of always on earbuds, which would de-stigmatize hearing aids, seems like such a good idea. I’ll be wearing them soon enough anyway.

- Text input – Wikipedia says typing speed is typically 40-80 words per minute. While voice and hearing are much faster, at 150 words per minute. I know our generation likes typing at the keyboard. But with Touchscreen + Voice why would kids bother to learn qwerty keyboards? Note: reading is still optimal for absorbing information, at 250-300 words per minute. So writing well will continue to matter, even if you compose your text verbally. In fact why wouldn’t the next generation of computer languages be optimized for voice + touch input? Keyboards would become relics for old school programmers.

- Health. Your ear is an ideal place to monitor temperature, pulse, possibly even blood sugar levels. While most Bluetooth headsets will be just voice, it’s easy to imagine a deluxe version that adds in health and activity monitoring. And if you already have Bluetooth earbuds on all the time anyway, why buy an extra device?
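As a rough check on the text input numbers in the list above, here is a small Python sketch that converts the quoted words-per-minute rates into time spent on a single message. The 600-word message length is a hypothetical figure chosen for easy arithmetic. The rates are the ones cited in the text.

```python
# Words-per-minute rates as quoted in the text input bullet.
RATES_WPM = {
    "typing (slow)": 40,
    "typing (fast)": 80,
    "speaking": 150,
    "reading (slow)": 250,
    "reading (fast)": 300,
}

MESSAGE_WORDS = 600  # hypothetical message length, for illustration only


def minutes_for(words, wpm):
    """Minutes needed to get through `words` at `wpm` words per minute."""
    return words / wpm


times = {label: minutes_for(MESSAGE_WORDS, wpm) for label, wpm in RATES_WPM.items()}

for label, minutes in times.items():
    print(f"{label:15s} {minutes:5.1f} min")
```

At these rates the message takes 7.5 to 15 minutes to type, 4 minutes to dictate, and 2 to 2.4 minutes to read, which is the asymmetry behind composing by voice while still consuming by eye.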

So who wins and who loses? Google is of course potentially the biggest winner. As voice interaction becomes critical, Google could leverage their lead in voice to take global market away from forked Android, which lacks integration with Google Now. Then bypass intermediaries and steer Android customers directly to the voice ads they want. Benedict Evans asks a great question:

I could easily imagine Tony Fadell making awesome Google Now wearable earbuds. Ones that work not just with Android, but also with iPhone using a Google Now app. But Google Now on Apple devices couldn’t be as good as on Android because deep voice interaction requires access to contacts, location, camera, microphone, maps and launching other apps. Google wouldn’t mind. But Apple wouldn’t allow it. Hence the larger strategic threat to iOS. They may lose the premium end of the market during the voice interaction era.

With that said, Siri has improved considerably of late. Likely it will play out similar to maps. A ding against Apple, but “good enough” so most people won’t switch. Globally, we should expect regional players to jump into the action (especially in Asia) once the voice interaction market is validated.

Back in the day people argued a lot about whether command line or GUI was better. No doubt people will do the same for voice versus touch interface. But startups with a focus on voice will have a strategic edge in this battle, just as early adopters of GUI software had over command line vendors. And in a decade or two down the road, you may not even need the phone itself. At the end of Ben Thompson’s excellent digital hub piece, he speculated that eventually a wristband based iWatch might take over the phone’s role of personal digital hub. But consider. A behind the ear headset could have enough computing power to do that as well. iEar sounds like a terrible name, but I wouldn’t put it past Apple to eventually create a device along those lines. In the larger sweep of history, the introduction of Siri/Google Now may loom larger than the introduction of the iPhone itself. That seems crazy today. But don’t rule it out completely.

Finally, for (an utterly unfair) validation that voice technology is about to break out, and it’s three years too late to join the party, consider this recent announcement: “Cortana, Microsoft’s answer to Siri.”

_______________________________________

Footnote: To be clear, I’m a huge fan of the changes at Microsoft under Satya Nadella. Office for iPad and all the rest. A strong Microsoft is good for everyone. Just wanted to have some fun with the last line.

Here are my other posts on voice interaction:

- Apple’s strategy tax on services versus Google. Voice interaction becoming the “God particle” of mobile.

- Beyond the Touchscreen Interface, to Voice Interaction

- What Chess and Moore’s Law teach us about the progress of technology

- The debate on Strong Artificial Intelligence and conscious computers (poor title choice on this last one, it’s actually about how everyone will believe computers are conscious even when they are not, once people talk to them all the time)

Nathan, since you said you don’t read my comments because they are off-topic, I’ll post this for anyone else who will read them.

Others said it first:

– Your God Particle essay made me a believer. Although Star Trek demo’ed it first. Star Trek which is soft scifi, a genre that would be unprintable drek, according to one of your essays.

– People will become illiterate. Basically a trope in scifi futurism.

I already thought these, so both of us think this:

– Convergent phone is hub of any Kurzweil multiplicity of devices

– AR and VR are different markets

– Battery life is flaw of smart watches

– Oculus Rift is astounding. (Synchronicity: I tweeted about the SDK the same day Mark decided to buy the company.)

I disagree:

– I never used it seriously, but Dragon NaturallySpeaking had good voice recognition once it was trained to an individual

– My $20 Peltor ear muffs + $10 earbuds block noise better than my $300 Bose noise cancelling headphones. Consumer noise cancellation is crap.

Others disagree:

– Humans can multitask audio: “26 percent of all crashes are tied to phone use, but noted just 5 percent involved texting. Safety advocates are lobbying now for a total ban on driver phone use, pointing to studies that headsets do not reduce driver distraction.” – http://beta.slashdot.org/story/199993 But source is Slashdot so be skeptical. For example 8 months earlier they posted a summary saying the opposite.

– On HN, commenters said Office for iPad began under Ballmer. (I don’t have any other information.)

Others diverge off-topic:

– NPR this morning had a minority tech guy talking about selling bluetooth ear pieces for people who can’t afford headphones.

You raise a question:

– Is language processing difficulty exponential? Given my disagreement about Dragon, I think not really.

-Saber

s/afford headphones/afford hearing aids/;

I can’t imagine voice being a primary UI. It’s great for the car, it’s a good augment at home, but I don’t wanna be dictating texts or emails in public. Think about all the places you go in a day where you don’t really wanna be speaking in monotone to your device. It’s a weird, aural uncanny valley.

The ear form factor makes some sense, though I suspect in-ears have never been a popular place for jewelry or wearables for a reason. It’s sort of an awkward, uncomfortable place to put something. Wrists, fingers, necklaces, ankles… Those are natural, and they have tradition to them.

Speaking generally, I love all the experimenting going on in the wearables space, especially all the thoughts coming from the blogosphere. I don’t like how unambitious the tech giants have been so far, I think Android Wear is a terrible piece of corporate conceit, and the Galaxy Gear is depressingly, pitifully opportunistic. The most interesting thoughts have actually come from bloggers like Ben Thompson, Chockenberry, Sammy the Walrus, and yourself.

Though I must say, with all due respect to everyone who has written about wearables, I haven’t yet heard one feature, one insight, or one genuine creative breakthrough that could actually result in a product I wanna buy. Everything that has been written is purely theoretical, no real product ideas.

The only remotely interesting ideas have come from Apple speculation — something combining the intimate BLE interaction of beacons with breakthrough health monitoring capabilities. I see beacons combined with these advanced sensors as being as nearly unlimited in possibilities as the App Store. There’s going to be a beacon for everything soon.

Well, I could definitely be wrong about in ear wearables. Also agree on Beacons. With that said, think the market for voice interaction as primary interface is mostly for people who find smartphones intimidating. A supplement for power users, like the people who read tech blogs. 🙂

Lol, I make my criticisms with full understanding of how easy it is to be an armchair Steve Jobs and how hard it is to actually build something, or to even write about a subject like you have here. It’s a great piece.

Do you find smartphone intimidation to still be prevalent? You sure this isn’t one of those “death will take care of this” situations? I’d love to know how often Siri and Now are currently used. There isn’t any data on this, is there? Thanks.

http://earin.se/ Nathan – your future is getting closer!