Ben Thompson starts off his Peak Google post saying “Despite the hype about disruption, the truth is most tech giants, particularly platform providers, are not so much displaced as they are eclipsed.” By this he means old platforms and companies don’t fail or go away. They continue to dominate their old platforms. It’s just that new companies create new platforms that are so much bigger they eclipse the old ones. His examples are IBM mainframes being eclipsed by PCs, and PCs being eclipsed by smartphones. I want to pause here to note that both of his eclipse examples are driven by the invention of new and more personal input methods. Yes, it’s true PCs continued using command line input at first. But once PCs shifted to mouse/keyboard and graphical interfaces, IBM dropped out and PC use exploded. We entered the Microsoft era. For smartphones of course the input shift was moving to touchscreen interfaces, where Apple iOS and Google Android now dominate. History seems to show that computer platforms have such strong lock-in the original owners never lose control. Instead what happens is new entrants have a window of opportunity to eclipse old platform owners when new and more personal input methods become technically feasible.

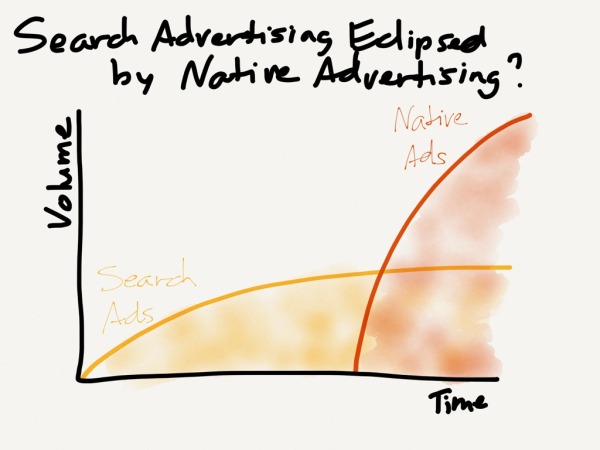

Using his eclipse framework, Ben Thompson then asks whether Google’s dominance in web search advertising leaves it with no room to grow, with web search becoming eclipsed by native ads. For native ads, think Facebook ads that show up natively in your timeline just like regular posts. These ads aren’t on the web, so Google web search doesn’t play in this space. And since native ads are a natural fit for the far larger brand advertising market, it’s possible Google may be eclipsed in the next era. Thus Peak Google.

Enter Apple Watch. In Apple Watch and Continuous Computing Ben Thompson says “I can’t quite shake the feeling that the Apple Watch is being serially underestimated. Nor, I think, is the long term threat to Apple’s position being fully appreciated.” He then describes that threat as below, kindly linking to one of my posts in his original of this paragraph:

More broadly, it’s clear that what the mouse was to the Mac and multi-touch was to the iPhone, Siri is to the Watch. The concern for Apple is that, unlike the others, the success or failure of Siri doesn’t come down to hardware or low-level software optimizations, which Apple excels at, and which ensures that Apple products have the best user interfaces. Rather, it depends on the cloud, and as much as Apple has improved, an examination of their core competencies and incentives argues that the company will never be as good as Google. That was acceptable on the phone, but is a much more problematic issue when the cloud is so central to the most important means of interacting with the Watch.

In fact let me quote from my original “God Particle” post from 2013. It was of course written before Apple Watch came out, and before we learned voice is a far more natural and compelling interaction for watches than phones:

If Google maintains their lead in voice, and voice interaction becomes as common as I expect, then Nexus Android becomes the de facto premium phone. Voice interaction as the “God Particle” of mobile is explicitly clear to anyone who talks to Google about it. Apple knows this of course. We should never expect Apple to be best in services across the board. Rather we should ask if Apple can overcome their general strategy tax on services to make an exception for voice interaction, making it competitively first class.

The historical red warning flag indicating a platform shake up is possible is the rise of another new and far more personal input method for computers: voice. Recall Apple acquired Siri way back in 2010 and released it in 2011. Why is this threat happening now? It’s not just the Watch form factor, though of course the watch itself is a new and more personal platform and interface as well (glances, taps, crown, force touch, complications). But for voice I think the answer lies in understanding that Siri, or more broadly the technology of Natural Language Processing (NLP), has been making steady Moore’s law exponential progress for years. And this relentless exponential sustaining innovation is starting to crack thresholds of usability which make voice far more powerful and potentially disruptive than when it first shipped. And we can expect this exponential improvement in NLP to continue apace.

Stefan Constantine once remarked there are only two important events in the annual technology calendar: Google’s I/O and Apple WWDC. Last week was Google I/O, where Google announced Now on Tap, featuring an ability for voice interaction to work while another app is running. Their demo asked Google Now “What’s his real name?” while a Skrillex song was playing. It got the right answer based on context. Google is no longer about search as such. As they said in the annual securities filing “Now we are increasingly able to provide direct answers — even if you’re speaking your question using Voice Search — which makes it quicker, easier and more natural to find what you’re looking for.” And next week at WWDC, Apple is expected to announce Proactive, which “will leverage existing features such as Siri, Contacts, Calendar, Passbook, and third-party apps ‘to create a viable competitor to Google Now’.”

What’s starting to happen is voice interaction is becoming a new controlling layer that sits above all the apps on your watch and phone. And this layer plugs directly into the cloud services of your particular phone/watch platform provider. So your provider will decide what you get back from the internet as below:

This picture is fine for now since voice is not used that often. But it’s a bit more disturbing if you project the technology forward another decade to when voice is one of the most common ways to interact with computers.

Let me quote John Gruber on Google’s I/O event:

I think what Google’s I/O keynote was about is a post-mobile world. It’s about ubiquitous computing that is contextually-aware and identity-aware — about Google knowing who you are, where you are, and what you’re doing at all times. From your doorknob to your desktop. That’s not Google surpassing Apple at “mobile design”. That’s Google beating Apple to a world where anything and everything is a networked computing device.

And

To me, this week’s I/O keynote made me more convinced than ever that Google is turning into the Microsoft of old: a company whose ambitions are boundless, who wants its fingers in every single pie, and who wants to do it all on its own. A company whose coolest stuff is always in the form of demos coming in the future, not products that are actually shipping now.

Though I would use a less harsh tone, I agree with the above except for the very last sentence, at least as applied to Google Now (though it’s correct for Google Glass and many other Google products). Google Now is already shipping and riding a technological tidal wave of machine learning. Let’s tie this back to the discussion on native ads. If Google owns the voice interaction channel to the internet, and can do branded “native ads” whenever someone talks into their phone or watch, then Peak Google is solved. Google will be launched into the next wave without being eclipsed. Billions are (potentially) at stake. Where’s the closest restaurant I’ll enjoy? What’s the best toothpaste to buy? How much are tickets to the game? What apartment can I afford to rent? What kind of car should I buy? Who should I marry? Except for that last question, I’m sure Google will eventually be able, and quite happy, to answer. With proper brand product placement of course. And a small finder’s fee owed by the end vendor for any purchase. As Google becomes the front end to a potentially huge new voice interaction distribution channel, they’ll take their cut.

To be clear, voice interaction is additive to all the existing input methods we already have. So the old stuff will stick around: touchscreen, mouse, touchpads, keyboards, etc. It’s just that voice requires zero training and is super convenient. In fact the NLP machine learning engines that handle voice should also work with text messaging as well. Often text is far more convenient: in crowds, when you want asynchronous responses, want a written response, etc. Never bet against text. There are a ton of startups right now exploring text messaging interfaces to get things done, e.g., Magic. But I think the platform owners have a natural advantage here, just like they do in voice NLP interaction, because they have scale plus the deep platform privileges to do things at the OS level. No doubt a text message interface to Google Now and Apple Siri will arrive at some point.

Thinking this through, it’s pretty clear that once Google starts using voice interaction to steer consumer spending at scale, they’ll get hit with more antitrust and privacy lawsuits. Just like the ones they are already fighting for steering people with web search results. Particularly true for Europe. On the other hand, NLP is an awesome and really useful technology that will help not just the richest parts of the world, but also the poorest. A text message interface to a Google NLP AI would be an incredibly cheap way to help the poorest billion people on the planet. In the end we’ll see voice and text NLP embraced as just another way to interact with computers, even if that way ultimately gets determined through lawsuits and regulations.

On to privacy. Apple CEO Tim Cook went after Google this week saying “You might like these so-called free services, but we don’t think they’re worth having your email, your search history and now even your family photos data mined and sold off for god knows what advertising purpose. And we think some day, customers will see this for what it is.” Well. At some level I think he’s being honest. Apple does care about privacy. But at another level he sounds a bit clueless as to which way the wind is blowing. It’s also very easy to say you don’t sell data when your business model is selling devices, and all your cloud products are inferior to the free ones you’re disparaging which so many people happily rely on. Sorry for so many Ben Thompson links and quotes in this post, but please allow one more since it’s pithy. Thompson calls this a Strategy Credit (as opposed to a Strategy Tax). It’s a credit you can claim for something you’d do anyway because of how your business model works. My personal view on privacy is that, for both good and bad, we’re slowly headed towards the transparent society, where corporate computers track most of what we do. My post here. Hence I’m personally unbothered by Google offering amazing free services at the price of tracking your data and advertising. For example, when Comcast had a DNS outage last week where I live, I pointed our family router to Google DNS. I’m not too concerned they’ll now learn how often my daughters play Minecraft. Something which in theory another big corporate entity, Comcast, already could have tracked. With that said, I think it’s a quite respectable view to be angered by and opposed to this slow erosion of privacy. And certainly tech regs for your personal data are something everyone should support. But the bottom line here is I think history is not unfriendly to Google’s approach, even though Google will inevitably (and rightly) attract privacy and antitrust lawsuits.

Apple and Google’s differing business models and corporate cultures are also pushing them toward different flavors of voice interface. To re-apply an analogy from my recent AI Risk post, I think Apple is tending towards a more humane interaction, like Samantha from the movie Her. While Google has flat out declared “Our vision is the Star Trek computer. You can talk to it—it understands you, and it can have a conversation with you.” No nonsense, that Star Trek computer.

And it’s not just Apple and Google. See this impressive video from SoundHound. Or Amazon Echo. And of course there’s Microsoft Cortana, which I left in my diagrams above for a reason. Apple and Google are both consumer companies. And while Google often gets called the new Microsoft, Microsoft by contrast is and has always been an enterprise software company. And businesses need voice interaction too. Why not something like Cortana for business?

The question remains whether this new interaction model is different enough that new platforms and companies will arise to eclipse the old, or whether the existing platform leaders can slot voice into their ecosystems without major disruption. To answer, I want to go back to when I was a kid and got my first computer, an Apple II+. One of the programs I played with was ELIZA, one of the very first chatbots. It was rather dumb, just repeating back what you said as a question with slight changes. And I didn’t really enjoy it that much until I changed the code to make it curse. A lot. But it was also surprisingly fun and enjoyable. I still recall the delight when it provided an answer that was fun or made sense. In fact Joseph Weizenbaum, the inventor of ELIZA in 1966, surprisingly wound up hating it. Since I’m quoting old posts today, let me dip back to a three-year-old post from 2012 about Weizenbaum, which also outlines how voice interaction might play out:

What’s fascinating about Weizenbaum is that he found one of his assistants pouring her heart out to ELIZA late one night, and it disturbed him so much that a real human could pour their heart out to a machine that he began a crusade against AI that lasted the rest of his life. He went overboard of course, but Weizenbaum was on to something. Humans have a hard wired capacity to interact with others as social beings, and that capacity is so automatic and innate that it leaps into action even with a machine whether we want it to or not.

So my prediction is that the next real leap in human/computer user interaction is that tablets and phones will learn to talk. And just as the graphical interface and mouse neatly layered on top of the older command line text interface without much disruption, so will voice interaction perfectly overlay the existing tablet-phone user interface. A perfect fit. And if this analogy holds, the companies leading the tablet market today are well positioned to jump across this transition, just as Microsoft was able to jump across from command line DOS to the newer graphical interface market. This means not only are Google Android and Apple iOS going to continue to dominate in the phone-tablet space, they are also likely to lead in the voice interaction era to come, which will be bigger than ever.

This seems even more true now than it was 3 years ago. Sure, Apple’s cloud ability is second best, but they are making huge strides and arguably the gap to Google is smaller than it was 3 years ago. And Apple Watch shows off Apple’s huge design strengths in bringing new product categories to market. This is more than enough to compensate for Apple’s other weaknesses. Overall Apple products remain premium, if somewhat under threat. Google is happy to allow their own products on Apple’s platforms given the premium customers. And despite or perhaps because of Google’s technical genius, their design understanding of what most people want remains rather marginal. The most likely outcome for voice interaction is not major disruption but adoption by the existing platform leaders. Though with some real, if not huge, market shifts.

Finally, if I may, I’d suggest Google not so closely identify with their talking computer. In fact they should stop their current “OK Google” and “Google Now” ego trip. If your design goal is to have your talking computer treated exactly like a real person, you need to give it a non-corporate name. Real people have real names. My suggestion would be something that matches Google’s culture and personality. Perhaps, well, how about “Spock”? Though on second thought that might already be taken.
