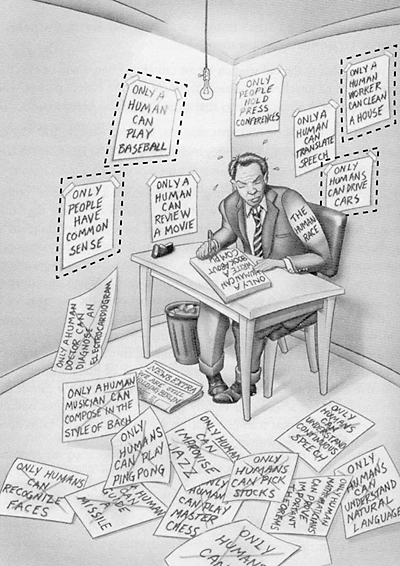

The cartoon above is my favorite version of the sentiment that Artificial Intelligence (AI) is defined as anything computers can’t do… at least not yet.

It’s from Ray Kurzweil’s 1999 book The Age of Spiritual Machines. In a narrow sense, this is exactly correct. Chess went from AI to just another algorithm in 1997, when Deep Blue beat Garry Kasparov. A similar thing is happening now with Go. And self-driving cars, on the wall in the cartoon above, will fall shortly.

While I used to find this framing clever, lately I wish people would stop using it. Why? Because defining AI as “anything computers can’t do” inadvertently implies AI is a Sisyphean field, forever running the Red Queen’s race, never moving towards a destination. This is wrong. There’s an obvious destination. Look to fiction. In the movie 2001: A Space Odyssey, Dave talks to (the conflicted) computer HAL. In Star Trek, Captain Kirk talks to the ship’s computer. In Star Wars, Luke has R2-D2 and C-3PO. In the movie Her, Theodore talks to and falls for the engaging Samantha. In fiction, AI always means talking computers. This is why the recent progress in deep learning, natural language processing and conversational interfaces is so exciting. Google Assistant, Apple Siri, Amazon Alexa, Microsoft Cortana, etc., are starting to directly chase our imagination.

The underlying issue here is terminology. Narrow Artificial Intelligence means doing a particular task: predicting the weather, recognizing faces in images, playing Go, driving a car. Once achieved, narrow AI always becomes just another technology. As it should. Artificial General Intelligence (AGI) is defined as being able to do the things humans can do, and in particular being able to self-learn new things. This is a real and fixed end goal. And in our fictional imagination, achieving this goal has always been about creating talking computers.

Defining AGI – mind as prediction, following Jeff Hawkins

Of course this just pushes the problem up a level. Nobody agrees what intelligence is. Much less artificial general intelligence. In the spirit of strong views, weakly held, I’ll strongly advocate for Jeff Hawkins’ view of AGI, from his excellent book On Intelligence published in 2004. Hawkins takes his inspiration for intelligence directly from the biology of the brain. He argues prediction is the centerpiece of mind and intelligence. Here’s a passage from p. 104 of his book:

The human cortex is particularly large and therefore has a massive memory capacity. It is constantly predicting what you will see, hear, and feel, mostly in ways you are unconscious of. These predictions are our thoughts, and, when combined with sensory input, they are our perceptions. I call this view of the brain the memory-prediction framework of intelligence.

If Searle’s Chinese Room contained a similar memory system that could make predictions about what Chinese characters would appear next and what would happen next in the story, we could say with confidence that the room understood Chinese and understood the story. We can now see where Alan Turing went wrong. Prediction, not behavior, is the proof of intelligence.

Getting into the details of Hawkins’ view of intelligence, and his approach to hierarchical temporal memory (HTM), will have to wait for another post. Or read his book! And to be clear, I’m not claiming his company Numenta will be more successful at AI than massive players like Google. Having insight into the biology of intelligence is different from creating working technology that mimics it, which is different again from creating a successful business. But I’m a fan, so we’ll see what happens. The point I want to emphasize here is mind as prediction. You predict, and you act to make the predictions you desire come true. The ball comes at you. You predict you will catch it, and automatically move your arm and hand to do so. If you drop the ball, with repeated practice you learn to predict and catch better. This is not a static view of intelligence, but a view where sense data constantly flows in, and predictions of how your interactions affect it constantly flow back out.
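The predict, act, and correct loop in the ball example can be sketched as a toy program. This is only an illustration of error-driven learning, not Hawkins’ HTM or anything from Numenta; the function name `practice_catching`, the learning rate, and the throw data are all made up for the example.

```python
def practice_catching(throws, learning_rate=0.5):
    """Predict each landing spot, compare with reality, and learn from the miss.

    Toy model (an assumption for illustration): the catcher learns a single
    correction, `predicted_offset`, that is added to the launch position.
    """
    predicted_offset = 0.0  # starts out knowing nothing about the throw
    errors = []
    for launch_position, actual_landing in throws:
        prediction = launch_position + predicted_offset  # predict before acting
        error = actual_landing - prediction              # what the senses report back
        predicted_offset += learning_rate * error        # nudge the prediction toward reality
        errors.append(abs(error))
    return predicted_offset, errors


# Hypothetical practice session: every ball lands 2.0 units past its launch point.
throws = [(float(x), float(x) + 2.0) for x in range(10)]
offset, errors = practice_catching(throws)
print(f"learned offset: {offset:.3f}")
print(f"first miss: {errors[0]:.3f}, last miss: {errors[-1]:.3f}")
```

With each throw the miss shrinks, which is the sense in which repeated practice improves the prediction. A real system would have to predict far richer sensory streams than one number, so treat this as a cartoon of the idea rather than a model of it.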

So what does this all mean?

I’d summarize my thoughts as below:

- Intelligence is not a Platonic ideal, but something which should be measured on a sliding scale and in context. Insects have minds: they predict and act. Self-driving cars also predict and act in the world. In that limited sense both insects and self-driving cars have some intelligence, at least within their narrow context. Insects of course are far more flexible and general. For now.

- Creating AGI is about building more and more complex self-training technology that predicts what happens in the world, and how actions will change things. Note: I’m sorry to inform my past drunk college sophomore self, up way too late at 3am, but there is no super deep mind-body philosophical secret to unlock here. It’s merely (very very very complicated) engineering.

- Narrow AI, once solved, becomes just another technology. Yes. But belaboring this point is not as much fun as it used to be. Especially as computers are starting to talk. And taking AGI as a memory-prediction framework, it’s easier to see how narrow AI which self-learns and self-predicts (even if only within a limited context) is a step forward.

- True AGI means generalized real-time prediction based on self-learning from incoming data streams, with predictions of how actions will change things. So for example the work on Google’s TensorFlow around prediction and prediction differences is key (example). Static pattern matching and identification of cats is awesome, but self-trained real-time prediction/action/correction is the future.

- AGI will be built first on real-time modeling/predictions of the physical world, which is easier than predicting people. E.g., self-driving cars. Though even self-driving cars have to predict the behavior of pedestrians.

- Talking computers at first will be fairly dumb, similar to the Star Trek computer, with limited context for understanding what you are thinking. But these assistants will still be extremely useful (just as the Star Trek computer is useful to Kirk).

- In some sense voice can be considered the final computer interface, as it’s the one evolution has most highly tuned humans to use for communicating and coordinating. Talking computers are a big deal. Much will change. And yes, VR interfaces will matter, but I’d argue a computer that can talk to me is a bigger deal. Though computers talking to me in VR will be pretty awesome.

- I view smart chatbots as a form of natural language interaction, in written form. Companies will likely start with a base in either chat or voice, but then immediately push out into the adjacent area to provide a complete chat/voice solution. I suspect voice is harder than text, and so may give an advantage to companies with a base there, but we’ll see.

- Getting true AGI as envisioned in science fiction means the computer not only models the physical world, but also has a theory of mind. That is, it can to some extent predict what people might do next depending on what they do and say. This is a super hard problem. It will take a while. But just having Alexa be smart enough to know which game I mean when I (as a particular individual) ask “who won the game last night?” shows there’s already a solid business model to push this technology forward.

- Privacy? Well, computers will know everything about you so they have the context to serve you. Your favorite shows, where you go, where you stay, likes, dislikes, food, movies, etc. As always with privacy, many profound hot take think pieces will be written. And as always, they’ll be ignored as people display their revealed preference.

- What about General AI that directly or inadvertently kills all humans? We’re talking the Skynet Terminator scenario. This is so contingent it’s hard to say. But the good news is that, given human economic incentives, whatever general AI does eventually, it will definitely be able to talk to us and understand us. So at least we can talk to the Terminator before it shoots us. Or if it’s friendly, we can sit down and have a nice beverage and a chat.

- Hawkins believes the neocortex has a single flexible and unified set of processes by which it does all its predictions: sight, touch, sound, movement, language. If this is correct (and until someone convinces me otherwise I’m on board), this means that once this technology becomes completely generalized, we will have a general purpose learning algorithm which can be applied to all sorts of problems. Including modeling people, which is what gets us to true AGI.

The next decade or two should be a lot of fun.

_________________________

Appendix – extra reading, if you feel a need for more links.

- Jeff Hawkins argues his company’s approach is better than traditional neural networks like deep learning. Again, I’m not sure this will win out, but the comparison is interesting.

- Jeff Hawkins video from December 2014. He outlines his thoughts on how brains work and machine learning. The video is 40 minutes long. I recommend it. I also took a couple of screen grabs (see the bottom of the post).

- It’s worth noting, if you were not aware, that Jeff Hawkins is the same person who founded Palm (maker of the PalmPilot) and Handspring (Treo). He’s always been interested in brains and intelligence, so has been doing that instead of smartphones since about 2002 or so. His book came out in 2004, and he founded Numenta in 2005. I saw him speak in a smaller setting just before the Treo came out (maybe 2000?). Down to earth. Impressive. Of course he could be wrong about all this, but he’s worth taking seriously.

- My related posts.

- Understanding AI risk. How Star Trek got talking computers right in 1966, while Her got it wrong in 2013.

- 2015 is a transition year to the (somewhat creepy) machine learning era. Apple, Google, privacy and ads.

- The surveillance society is a step forward. But one that harkens back to our deep forager past.

- I also did some posts on voice interfaces and consciousness back in 2012, before I was writing all that well on my blog. But take a look if interested.

- Beyond the Touchscreen Interface, to Voice Interaction

- Consciousness and Free Will

- The debate on Strong Artificial Intelligence and conscious computers

- Minor note: if you read this far, you may have seen I accidentally sent out a post called “Age of Em” on June 8. It was a blank draft so I deleted it. Hopefully I’ll squeeze in some time to write about Robin Hanson’s book, as I really enjoyed it.

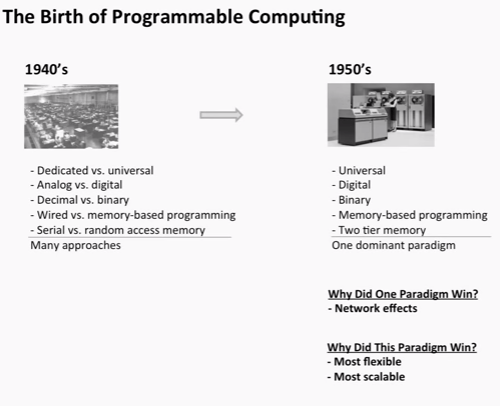

Screengrabs from the Jeff Hawkins video mentioned above, which draw a parallel between special purpose and general purpose computing, and special purpose and general purpose AI.

I’m on board too, especially with your views on privacy and revealed preference!

You can think of AI as another runtime OS. First there was Windows and Apple OS, then the Internet, and next will come AI. Let’s have a margarita and talk about it.